Effect of Directional Strategy on Audibility of Sounds in the Environment for Varying Hearing Loss Severity

Reprinted with kind permission from ReSound

Abstract

Directional microphones in hearing aids have been well-documented to improve speech recognition in noise in laboratory conditions. The real-world perceived benefits of directionality have been less dramatic. The development of directional technology during the past decade has focused on improving laboratory benefit by means of adaptive behavior, and more recently, binaural beamforming made possible by ear-to-ear audio streaming. In contrast, ReSound has pursued a strategy for applying directional technology that takes advantage of auditory processing by the brain, with the goal of optimizing real-world benefit. In this study, ReSound Binaural Directionality III is compared to two commercially available binaural beamformers to explore the possible advantages and disadvantages of these very different approaches to applying hearing aid directionality.

Introduction

Directional microphones amplify sound coming from a particular direction relatively more than sounds coming from other directions. They are the only hearing aid technology proven to improve speech understanding in noisy situations. However, some conditions must be met in order to benefit from a directional microphone. For one thing, the signal of interest must be spatially separated from the noise sources. In addition, the signal of interest must be located within the directional beam and should be within two meters of the listener. Directional microphones in hearing aids are designed to have a forward-facing beam as worn on the head. This means that the hearing aid wearer must face what they want to listen to. By constructing a test environment that fulfills these conditions, the benefit of directional microphones in hearing aids is easily demonstrated. However, real-world environments bear little resemblance to contrived laboratory test environments. Real listening environments are unpredictable in terms of the acoustics, the type and location of the sounds of interest, and the type and location of interfering noises. To complicate matters further, any of these sounds may move, and the listener may want to shift attention from one sound to another. A sound that is the signal of interest one moment may be the interfering noise the next.

It has been observed that the benefit of directional microphones is not perceived to the degree that laboratory tests of directional benefit would imply [1]. There are numerous acoustic and personal intrinsic factors that play a role in this discrepancy. An additional factor is simply that directional microphones can interfere with audibility of the sound of interest when it does not originate from the direction that the hearing aid wearer is facing. It is assumed that individuals wearing directional hearing aids will orient their heads toward what they want to hear. However, in daily life it is not at all unusual to listen to sounds that one is not facing. In fact, it has been shown that more than 30% of adults’ active listening time is spent attending to sounds that are not in front, where there are multiple target sounds, where the sounds are moving, or any combination of these [2].

ReSound has taken an unconventional approach to applying directionality that considers both the advantages and disadvantages of this type of technology. Binaural Directionality III leverages the brain’s ability to compare and contrast the separate inputs from each ear to form an auditory image of the listening environment. By providing access to an improved signal-to-noise ratio (SNR) for sounds in front while maintaining audibility for sounds not in front, Binaural Directionality III allows hearing aid wearers to focus on specific sounds, to stay connected to their auditory environment, and to shift their attention at will [3,4]. The Binaural Directionality III strategy controls the microphone mode of each hearing aid depending on the presence of speech and noise in the environment, as well as the direction-of-arrival of the speech. The possible configurations that can result include bilateral Spatial Sense, bilateral directionality, asymmetric directionality with directionality on the right side, and asymmetric directionality with directionality on the left side [5].

The rationale behind Binaural Directionality III contrasts sharply with the advanced directional technologies in other premium hearing aids. The focus of development of those technologies has been to maximize SNR improvement in laboratory environments. The most recent advancement in this area is to use an array of all four microphones on two bilaterally worn dual-microphone hearing aids to attain a greater degree of directionality, commonly referred to as binaural beamforming. A binaural beamformer creates one monaural signal which is delivered to both ears. While there may be additional features that attempt to preserve some cues for localization, the overall effect of this approach is to eliminate the contrasts in the per-ear acoustic signals that enable binaural hearing. Speech-in-noise testing under laboratory conditions has shown either modest or insignificant improvements in directional benefit for speech in front compared to traditional directionality [6,7]. The lack of greater differences is perhaps related to the unavailability of binaural hearing cues that is a result of binaural beamforming.

How large a trade-off is being made for the additional benefit provided by binaural beamformers? In other words, how does the advantage of a modest improvement in speech recognition in noise for speech in front weigh against the possible disadvantage of reduced audibility for speech coming from other directions? To begin to answer this question, it is of interest to explore how performance on speech recognition in noise under laboratory conditions is affected when speech arises from varying directions. The investigation described in this paper compared performance for participants fitted with ReSound hearing aids with Binaural Directionality III and with two commercially available premium hearing aids with binaural beamforming.

The research questions were:

- Is there a difference in speech recognition in noise for speech in front for Binaural Directionality III versus binaural beamformers and, if so, how great?

- Is there a difference in speech recognition in noise for speech from the side or behind for Binaural Directionality III versus binaural beamformers and, if so, how great?

- Are results dependent on hearing loss severity?

Methods

Subjects

Ten hearing-impaired individuals with moderate bilateral hearing loss participated in part 1 of this test. Seven individuals with severe-to-profound hearing loss participated in part 2 of this test.

Hearing instruments and fitting

The ReSound hearing aids tested were BTEs and super power BTEs with Binaural Directionality III. They were compared with premium BTE and super power BTE hearing aids from two other manufacturers that use binaural beamforming (hereafter referred to as “Hearing Aid A” and “Hearing Aid B”). The experiment was split into two parts according to hearing loss severity. Part 1 participants had moderate hearing loss and were fit with standard BTEs, and part 2 participants had severe-to-profound hearing loss and were fit with super power BTEs.

The three test instruments were fit to the NAL-NL2 gain prescription for each participant’s individual audiogram in order to rule out gain prescription differences as a source of any observed differences. The gains of the other manufacturers’ hearing instruments were fine-tuned to match the gains of the ReSound hearing instruments. This was done in a test box using the International Speech Test Signal (ISTS) at 65 dB SPL. When possible, the gains were matched to within ±2 dB of the ReSound hearing instruments for all frequencies between 500 and 3000 Hz.
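The matching criterion described above can be expressed as a simple check. The following is a minimal sketch only: the function name `out_of_tolerance`, the frequency grid, and all gain values are hypothetical and are not taken from the study. Only the ±2 dB tolerance and the 500 to 3000 Hz range come from the text.

```python
def out_of_tolerance(reference_gain_db, candidate_gain_db,
                     lo_hz=500, hi_hz=3000, tol_db=2.0):
    """Return (frequency, difference) pairs where the candidate gain deviates
    from the reference gain by more than tol_db within [lo_hz, hi_hz]."""
    mismatches = []
    for freq_hz in sorted(reference_gain_db):
        if not (lo_hz <= freq_hz <= hi_hz):
            continue  # only the 500-3000 Hz range was matched
        if freq_hz not in candidate_gain_db:
            continue  # no measurement available at this frequency
        diff_db = candidate_gain_db[freq_hz] - reference_gain_db[freq_hz]
        if abs(diff_db) > tol_db:
            mismatches.append((freq_hz, diff_db))
    return mismatches


# Hypothetical test-box gains in dB, keyed by frequency in Hz (not study data).
resound_gain = {250: 20, 500: 25, 1000: 30, 2000: 35, 3000: 38, 4000: 40}
other_gain = {250: 28, 500: 26, 1000: 33, 2000: 34, 3000: 38, 4000: 30}

# Frequencies that would still need fine-tuning before testing.
print(out_of_tolerance(resound_gain, other_gain))
```

In this made-up example only the 1000 Hz band falls outside the tolerance. The large differences at 250 and 4000 Hz are ignored because they lie outside the matched range.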

The hearing instruments were fitted with Binaural Directionality III for the ReSound hearing aids, and with the binaural beamforming active in the other hearing aids. All other settings were left at the manufacturers’ defaults.

Test material and setup

The test participants completed a speech-intelligibility listening test based on the Danish open-set speech corpus for competing-speech studies [8]. This test will hereafter be referred to as the “DAT” test. It is an adaptive test that results in an SNR at the speech reception threshold (SRT). In this test, both the signal and the competing noise are individual talkers, which is different from many other adaptive speech-in-noise tests that use speech-shaped noise or speech babble noise as the masker. A test with individual talkers as the competing signals is exceptionally challenging, as there is informational as well as energetic masking taking place. Because the competing speech is intelligible, the DAT test may be more representative of a real-world situation than typical speech-in-noise tests. The speech corpus contains three sets of 200 unique Danish sentences. The sentences are composed of a fixed carrier sentence with two interchangeable target words:

“[Name] thought about [noun] and [noun] yesterday”

“Name” represents the call sign, and each “noun” placeholder represents a unique noun. The nouns are in singular form and include the Danish indefinite articles (“en” and “et”) before each noun.

Examples of sentences include (English/Danish):

- Dagmar thought about a rescue and a suitcase yesterday/Dagmar tænkte på en redning og en kuffert i går.

- Dagmar thought about a predator and a toe yesterday/Dagmar tænkte på et rovdyr og en tå i går.

Each of the three sets of 200 sentences is spoken by a different female talker and starts with a specific name. The names are Dagmar, Asta, and Tine. The name of this test, “DAT”, is a reference to the first initial of each of their names.

The task of the test participants in this study was to listen for and repeat the target nouns of the sentences that start with the name “Dagmar”. The “Asta” and “Tine” sentences comprised the maskers. In part 1 of the experiment, which included the participants with moderate hearing loss, the maskers were played simultaneously from other loudspeakers while the “Dagmar” sentence was played at 65 dB SPL. On each trial, two masker sentences were randomly selected from the two sets of 200 masker sentences. The masker sentences were also presented at 65 dB SPL initially. When the test participants were able to repeat both of the target nouns in a “Dagmar” sentence successfully, the sound pressure level of the maskers was raised by 2 dB. If one or none of the target nouns was identified correctly, the sound pressure level of the maskers was lowered by 2 dB. The test participants did not receive any feedback concerning whether their responses were correct or incorrect. In part 2 of the experiment, which included the participants with severe-to-profound hearing loss, both the target and masker sentences were presented at 70 dB SPL for all trials, and the number of target words correct was noted.
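The part 1 adaptive rule can be sketched in a few lines. This is an illustration under stated assumptions, not the software used in the study: the function names, the response sequence, and the way the SRT is estimated from the track (averaging the SNR over the later trials) are all hypothetical, since the text does not say how the final SRT was computed. The fixed 65 dB SPL target, the 65 dB SPL starting masker level, and the 2 dB step come from the description above.

```python
def run_staircase(responses, target_db=65.0, masker_start_db=65.0, step_db=2.0):
    """Simulate the masker-level track for one test list.

    responses: number of target nouns repeated correctly on each trial (0, 1 or 2).
    Returns a list of (masker_db, snr_db) pairs, one per presented trial.
    """
    masker_db = masker_start_db
    track = []
    for n_correct in responses:
        # The target level is fixed, so the SNR is set by the masker level alone.
        track.append((masker_db, target_db - masker_db))
        if n_correct == 2:
            masker_db += step_db  # both nouns correct: raise maskers (harder)
        else:
            masker_db -= step_db  # one or none correct: lower maskers (easier)
    return track


def estimate_srt(track, skip=4):
    """ASSUMPTION: estimate the SRT as the mean SNR after the first `skip` trials."""
    snrs = [snr_db for _, snr_db in track[skip:]]
    return sum(snrs) / len(snrs)


# Hypothetical responses for eight trials (not study data).
responses = [2, 2, 2, 1, 2, 0, 2, 1]
track = run_staircase(responses)
print([snr for _, snr in track])
print(estimate_srt(track))
```

Because the maskers are raised only when both nouns are correct and lowered otherwise, a track of this kind settles around the SNR at which a listener gets both nouns right on about half of the trials.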

Because the recorded sentences naturally differ slightly in duration, time expansion or compression was applied to each of the masker sentences on each trial so that they precisely matched the length of the target sentence. The time expansion and compression were done using the speech analysis program Praat [9]. Because the sentence lists are not all of equal difficulty, an attempt was made to balance list difficulty so that its influence was reduced across all test participants.
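The length matching amounts to scaling each masker by the ratio of the target duration to the masker duration. The sketch below shows only that arithmetic. The study performed the actual time scaling in Praat; the function name and the durations here are hypothetical.

```python
def stretch_factor(target_duration_s, masker_duration_s):
    """Factor by which a masker must be time-scaled to match the target.

    A value above 1 means the masker is expanded (lengthened),
    a value below 1 means it is compressed (shortened).
    """
    if target_duration_s <= 0 or masker_duration_s <= 0:
        raise ValueError("durations must be positive")
    return target_duration_s / masker_duration_s


# Hypothetical sentence durations in seconds (not taken from the corpus).
target_s = 3.0
masker_durations_s = {"Asta": 2.5, "Tine": 3.75}

for name, duration_s in masker_durations_s.items():
    factor = stretch_factor(target_s, duration_s)
    # After scaling, each masker has the same duration as the target.
    print(name, round(factor, 3), round(duration_s * factor, 3))
```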

All of the hearing aids tested have adaptive features that rely on identification of speech and noise in the environment. Therefore, an attempt was made to ensure that adaptive features would engage. In part 1, speech-shaped noise from the Dantale II test [10] was played at a level of 45 dB SPL in addition to the DAT corpus. The speech-shaped noise was played from loudspeakers directly to the left, directly behind, and directly to the right of the test subject. Furthermore, the ISTS signal was played at 65 dB SPL from the front loudspeaker throughout the duration of the test with only brief pauses while the target and masker sentences were played. Participants with severe-to-profound hearing loss were not presented with the speech-shaped noise or ISTS signal during the trials, as it was not possible for them to complete the testing with these competing sources. For them, only the three DAT sentences were presented on each trial. For each test condition in both part 1 and part 2, however, the ISTS signal and the Dantale II test noise were started thirty seconds before the first trial in order to activate any adaptive settings in the hearing aids.

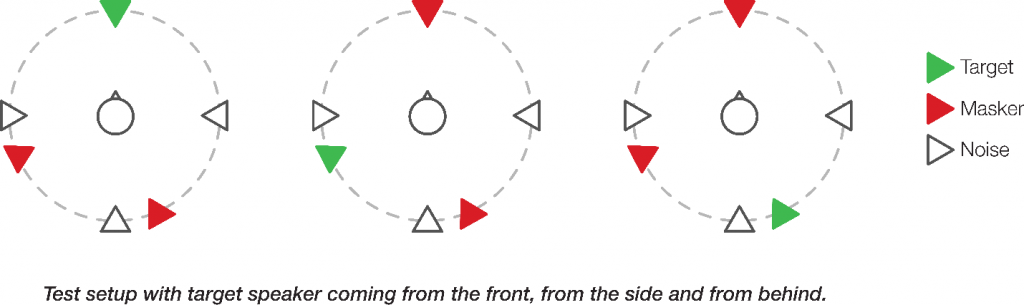

For each set of hearing instruments, three conditions were completed in which the target sentences came from three different loudspeakers. One condition was with the target sentences coming from the loudspeaker directly in front of the test participant, a second condition was with the target sentences coming from the left and slightly behind the test participant, and the third condition was with the target sentences coming from behind and slightly to the right of the test participant. The sequence of these conditions was counterbalanced among test participants. The maskers were played from the remaining two loudspeakers. The three test setups are illustrated in Figure 1.

Figure 1. Test setup with target speaker coming from the front, from the side and from behind. The noise was presented throughout testing for part 1 participants. It was presented for 30 seconds prior to each test condition for all participants in both part 1 and part 2 in order to activate adaptive features.

The test participants completed all three conditions in a row for each set of hearing aids: for example, three sentence lists with the ReSound hearing aids, three sentence lists with Hearing Aid A, and three sentence lists with Hearing Aid B. The sequence of the tested hearing aids was randomized for the test participants.
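One way to generate a test plan of this kind is sketched below. The text states only that the condition sequence was counterbalanced and the hearing aid sequence randomized; the specific scheme here (cycling through all six possible condition orders and shuffling the devices with a seeded generator) is an assumption made for illustration, and all names are hypothetical.

```python
import random
from itertools import permutations


def condition_orders(conditions, n_participants):
    """Assign condition orders by cycling through every possible permutation."""
    all_orders = list(permutations(conditions))
    return [all_orders[i % len(all_orders)] for i in range(n_participants)]


def device_order(devices, rng):
    """Return the devices in a random order without modifying the input."""
    order = list(devices)
    rng.shuffle(order)
    return order


conditions = ("front", "left", "behind")
devices = ("ReSound", "Hearing Aid A", "Hearing Aid B")
rng = random.Random(0)  # fixed seed only so that the sketch is repeatable

# One entry per participant (ten participants, as in part 1).
plan = [
    {"participant": i + 1, "conditions": order, "devices": device_order(devices, rng)}
    for i, order in enumerate(condition_orders(conditions, 10))
]

for entry in plan:
    print(entry)
```

With ten participants and six possible orders, such a scheme cannot be perfectly balanced: four of the six condition orders are used twice and two are used once.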

Each test participant completed three training lists prior to beginning data collection. The training was done while the test participant wore the first hearing aids to be used in the actual data collection for that test participant.

Results

For each of the three target-talker positions, statistical comparisons were performed between pairs of devices. The Tukey Honestly Significant Difference (HSD) criterion was used for the comparisons.

Part 1: moderate hearing loss

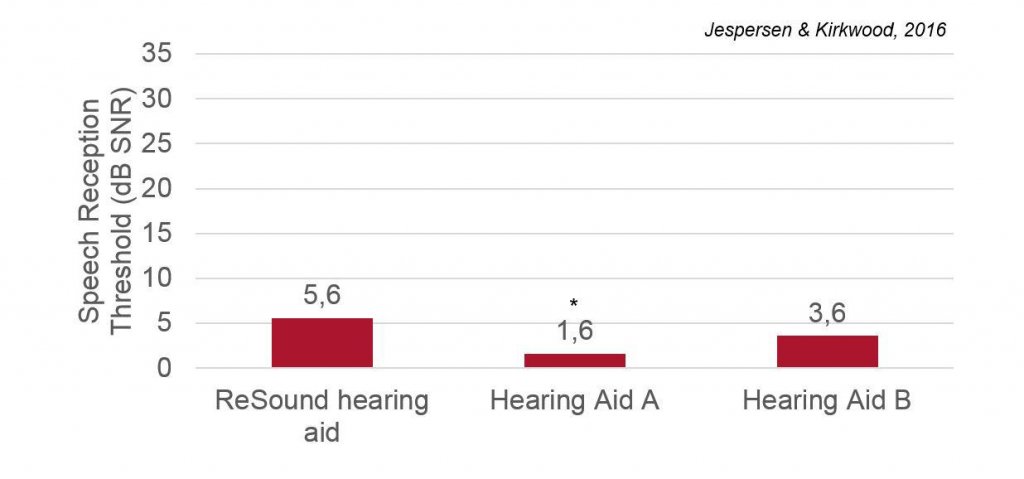

There was no significant difference between the SRTs obtained with the two hearing aids with binaural beamforming when the target talker was positioned in front of the test participant (p=0.23) as can be seen in Figure 2. Hearing Aid A performed significantly better than the ReSound hearing aid (p<0.01) when the target talker was in front of the test participant. There was no significant difference found between the ReSound hearing aid and Hearing Aid B.

Figure 2. Mean SRTs for the 3 pairs of test instruments with target talker in front. Lower values are better.

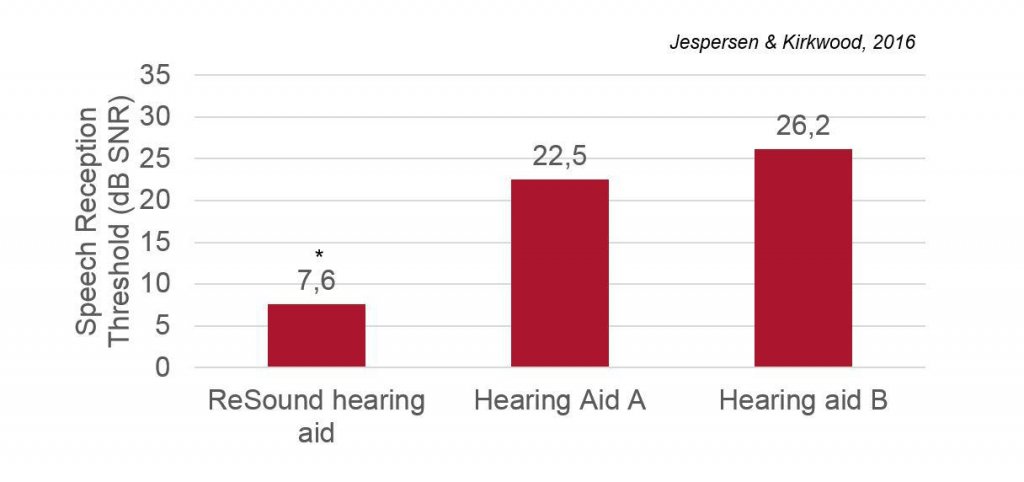

In the test setup with the target talker positioned to the left of the test participant, there was no significant difference between the SRTs obtained with Hearing Aid A and Hearing Aid B (p=0.41). The SRTs obtained with the ReSound hearing aid were found to be significantly better than with Hearing Aid A (p<0.001) and Hearing Aid B (p<0.001), as can be seen in Figure 3.

Figure 3. Mean SRTs for the 3 pairs of test instruments with target talker to the left. Lower values are better.

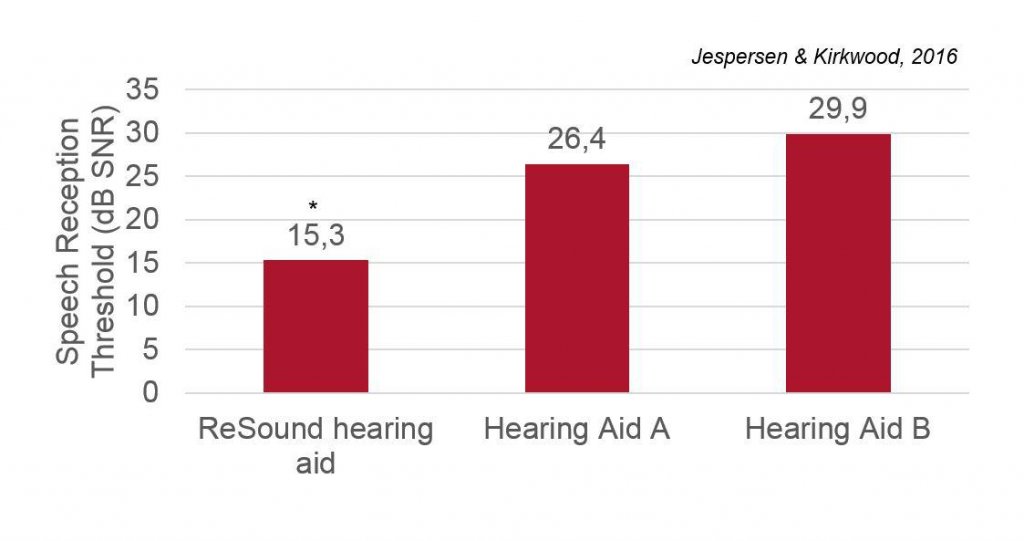

There was no significant difference between the SRTs obtained with Hearing Aid A and Hearing Aid B (p=0.44) when the target talker was positioned behind the test participant. In this condition, SRTs measured with the ReSound hearing aid were significantly better than with Hearing Aid B (p<0.001) and Hearing Aid A (p<0.01), as shown in Figure 4.

Figure 4. Mean SRTs for the 3 pairs of test instruments with target talker from behind. Lower values are better.

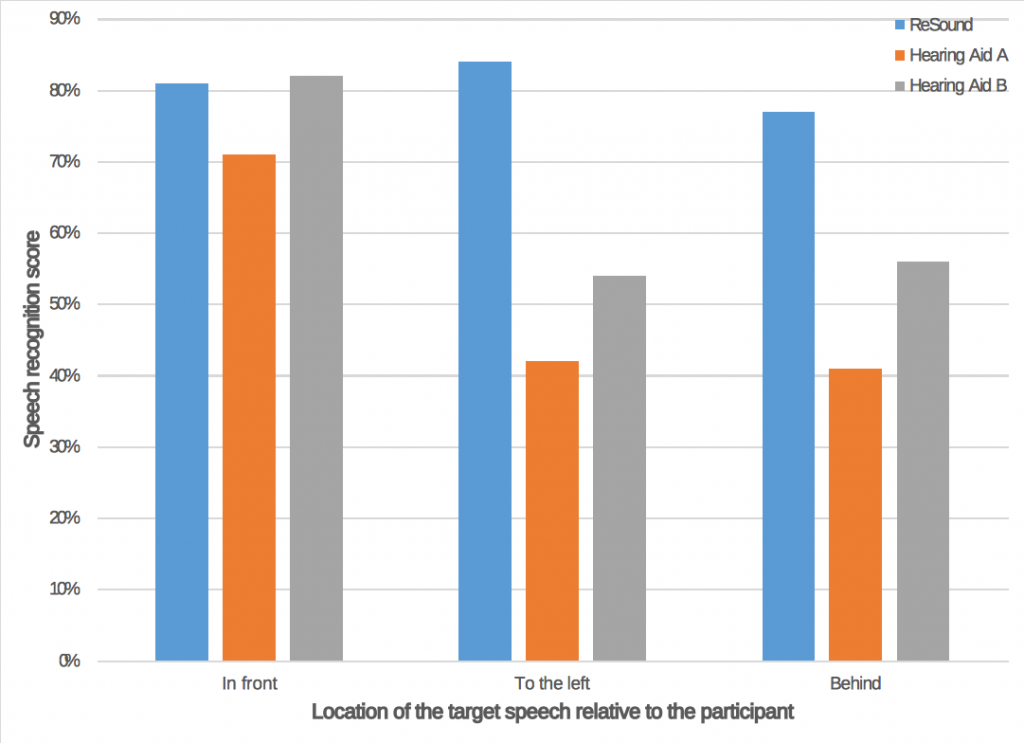

Part 2: severe-to-profound hearing loss

There was no significant difference in the percent correct obtained with Binaural Directionality III and either of the other two test hearing aids when the target talker originated from in front of the participant. Participants did significantly poorer with Hearing Aid A than with Hearing Aid B in this condition (p<.05). In the two listening conditions where the target talker was to the left or behind the listener, performance was markedly better with Binaural Directionality III than with the other two hearing aids (p

Figure 5. No performance differences were observed among the three hearing aids when the target speech was in front. Performance was significantly better with the ReSound hearing aids with Binaural Directionality III when the target speech was from the side or behind the listener.

Discussion

Although the current test was done under laboratory conditions, it serves to illustrate how real-world functioning with directional hearing aids can be impacted. Test participants were required to monitor the sound around them, identify the target speech, and shift their attention to it. The target and the maskers were all single talkers, and the direction of the target talker continuously changed. In real-world listening environments, the ability to monitor the environment, become aware of and identify a sound of interest, and attend to that sound is often required to communicate well. Furthermore, speech is often both the sound of interest as well as the competing noise. A simple, everyday example is a family gathering. Family members engaging in lively conversation will quickly change turns talking and even talk over each other. There may be multiple conversations going on at once, and the topics of conversation shift rapidly. To be limited to hearing in one direction necessarily limits the ability to participate in such an environment.

In this study, we observed that, as expected, all three hearing aids provided directional benefit for speech presented from in front. Furthermore, this was true regardless of hearing loss severity. For the participants with severe-to-profound hearing loss, performance with the ReSound hearing aids with Binaural Directionality III was equivalent to performance with the two hearing aids using binaural beamforming. For the participants with moderate hearing loss, performance with Binaural Directionality III was 4 dB worse than Hearing Aid A, while there was no significant difference compared to Hearing Aid B. For this limited listening condition, these results support a slight advantage of binaural beamforming that may be dependent on the specific technology and perhaps hearing loss severity. However, when the target speech was presented from the side or back, performance with the ReSound hearing aids was dramatically better than with either of the hearing aids with binaural beamforming, regardless of hearing loss severity. Thus the disadvantage of reduced audibility with the binaural beamformers for target sounds that were not in front was considerably larger than the SNR advantage of binaural beamforming over Binaural Directionality III that was observed for those with moderate loss and Hearing Aid A. In other words, a lot of potential damage is done for a modest potential advantage when all everyday listening situations are considered.

Participants in this study were instructed to look forward during the test. That is, once the target speech in a particular trial was identified, they were not allowed to turn their heads toward it. One might argue that this represents an unnatural listening situation, and that in the real world people would, via head movements, orient to their listening environments and turn their heads toward what they want to hear. This is certainly true. Better performance for the target speech from the side and back would be expected if the participants had turned toward it. However, it is difficult to turn toward something that one cannot detect. The enormous SNR advantage of Binaural Directionality III over the binaural beamformers implies much greater awareness of off-axis sounds as well as better speech recognition. In other words, better performance with Binaural Directionality III would also be expected even if the participants had been allowed to turn their heads simply because they would have been able to detect and orient themselves to the target speech more easily and quickly.

The notion that directionality can interfere with natural orienting behavior when listening is supported by Brimijoin et al. [11]. They asked participants to locate a particular talker in a background of speech babble and tracked their head movements. Participants were fit with directional microphones that provided either high or low in situ directionality. Their results showed not only that it took longer for listeners wearing highly directional microphones to locate the speaker of interest, but also that they exhibited larger head movements and even moved their heads away from the speaker of interest before locating the target. This longer, more complex search behavior could result in more of a new target signal being lost in situations such as a multitalker conversation in a noisy restaurant.

Best et al. [12] added further support that a high degree of directionality can decrease a listener’s ability to find and attend to speech in the environment. They presented target speech at azimuths of 0°, ±22°, and ±67.5°, and instructed listeners to locate and turn their heads toward the target speech. They compared performance with the participants using conventional directional processing and two different binaural beamformers. They found a small increase in performance of less than 5% speech understanding with the binaural beamformers versus conventional directionality as long as the speech was in front or at the 22° azimuths. When the target speech was presented at a wider angle, performance dropped for both conventional directionality and the binaural beamformers, but more dramatically for the latter. A decrement of approximately 15% was observed for the binaural beamformers relative to conventional directionality. This decrement probably reflects both the more effortful search behavior necessary to locate the speech and the inability to properly orient the narrow directional beam when not looking to the front. The helpfulness of binaural beamformers in improving speech understanding in noisy situations is complicated by the unpredictability and complexity of real-life listening demands, and binaural beamformers may in fact be detrimental depending on the user and the specific situation.

Even though testing was done under laboratory conditions, the results of the current study illustrate some of the trade-offs associated with the traditional school of thought regarding directionality and the way it is applied. A system that seeks only to maximize SNR improvement for sounds coming from in front may offer a slight advantage in the specific case for which it is designed, but provide very poor performance in other cases. For optimum benefit in the real world, the advantage for one particular use case should not cause even greater disadvantages for others. The ReSound approach to directionality seeks to strike the best balance between directional benefit and audibility of environmental sounds. In this way, hearing aid wearers can listen with the ear that has the best representation of what they would like to hear, yet the information is available for them to shift their attention if they would like. Binaural Directionality III provides access to an improved SNR, but without limiting the wearer’s ability to keep in touch with what is going on around them in the acoustic environment. Binaural hearing advantages arise from the brain’s ability to compare and contrast the different sounds being delivered from the right and left ears, and Binaural Directionality III supplies the brain with differentiated sound streams that allow for binaural hearing.

Conclusions

- The directionality in all three hearing aids tested provided directional benefit compared to omnidirectional for speech originating from in front of the listener.

- For those with severe-to-profound hearing loss, performance with speech originating from in front and competing speech from other directions did not differ for the three hearing aids tested.

- For participants with moderate hearing loss, the binaural beamformer in Hearing Aid B did not provide significantly more benefit than Binaural Directionality III when the target speech was in front of the listener, while the binaural beamformer in Hearing Aid A did.

- When the target speech was not in front of the listener, a dramatic advantage of Binaural Directionality III was demonstrated regardless of hearing loss severity.

References

1. Cord MT, Surr RK, Walden BE, Olson L. Performance of directional microphone hearing aids in everyday life. Journal of the American Academy of Audiology. 2002; 13: 295-307.

2. Cord MT, Surr RK, Walden BE, Dittberner A. Ear asymmetries and asymmetric directional microphone hearing aid fittings. American Journal of Audiology. 2011; 20: 111-122.

3. Groth J. Binaural Directionality II: An evidence-based strategy for hearing aid directionality. 2016; ReSound white paper.

4. Groth J. Binaural Directionality III: Directionality that supports natural auditory processing. 2016; ReSound white paper.

5. Stender T. Binaural Fusion by ReSound: Technology optimized as nature intended. 2012; ReSound white paper.

6. Völker C, Warzybok A, Ernst SMA. Comparing binaural pre-processing strategies III: Speech intelligibility of normal-hearing and hearing-impaired listeners. Trends in Hearing. 2015; 19: 1-18.

7. Picou EM, Aspell E, Ricketts TA. Potential benefits and limitations of three types of directional processing in hearing aids. Ear & Hearing. 2014; 35(3): 339-352.

8. Nielsen JB, Dau T, Neher T. A Danish open-set speech corpus for competing-speech studies. The Journal of the Acoustical Society of America. 2014; 135(1): 407-420.

9. Boersma P, Weenink D. Praat: Doing phonetics by computer (version 5.1.40) [computer program]. 2011; http://www.fon.hum.uva.nl/praat/.

10. Wagener K, Josvassen JL, Ardenkjær R. Design, optimization, and evaluation of a Danish sentence test in noise. International Journal of Audiology. 2003; 42: 10-17.

11. Brimijoin WO, Whitmer WM, McShefferty D, Akeroyd MA. The effect of hearing aid microphone mode on performance in an auditory orienting task. Ear & Hearing. 2014; 35(5): e204-e212.

12. Best V, Mejia J, Freeston K, van Hoesel RJ, Dillon H. An evaluation of the performance of two binaural beamformers in complex and dynamic multitalker environments. International Journal of Audiology. 2015; 54(10): 727-735.